Padauk — Open-Source Burmese-First Agentic AI Assistant

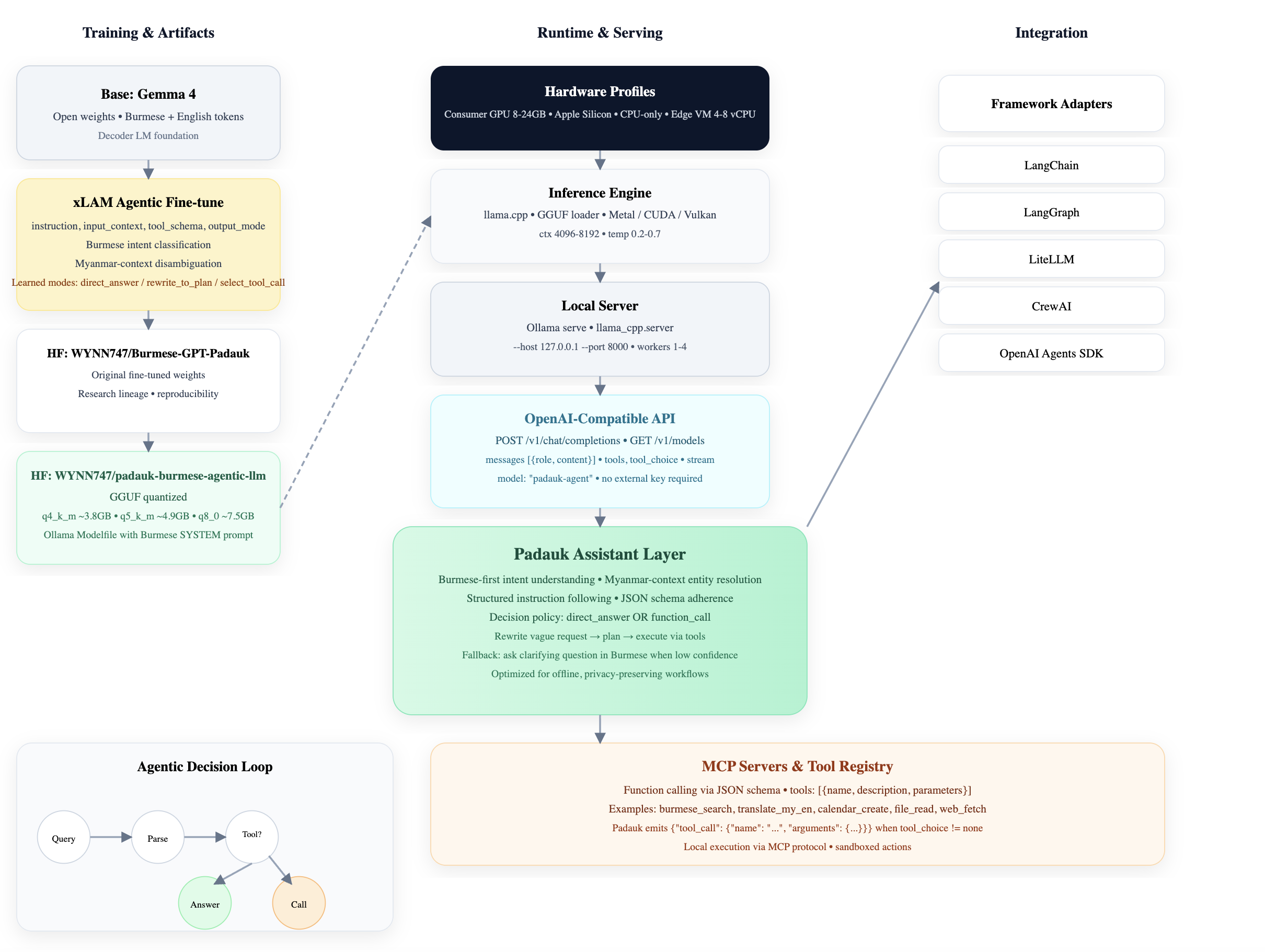

Padauk is built to be useful, not just plausible. The first milestone was Burmese language generation; the next is Burmese-first daily work. The model is based on Gemma 4 and optimized with xLAM-style data for decision-aware conversation, tool use, and low-resource deployment.

This open-source system supports a practical runtime path: GGUF artifacts, Ollama serving, and local OpenAI-compatible APIs so teams can run assistants and automations without depending on expensive remote stacks.
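As a minimal sketch of that runtime path, the snippet below builds and sends a chat request to a local OpenAI-compatible endpoint using only the Python standard library. It assumes Ollama's default local address (`http://localhost:11434/v1`) and a model registered under the hypothetical name `padauk`. Neither value comes from this page, so adjust both to match your setup.

```python
import json
import urllib.request

# Assumptions (not from the project page): Ollama's default local address
# and a hypothetical model name "padauk".
BASE_URL = "http://localhost:11434/v1"
MODEL_NAME = "padauk"


def build_chat_request(prompt, model=MODEL_NAME, base_url=BASE_URL):
    """Build an OpenAI-style chat completion request without sending it."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=f"{base_url}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def chat(prompt, timeout=60):
    """Send the request and return the assistant's reply text."""
    request = build_chat_request(prompt)
    with urllib.request.urlopen(request, timeout=timeout) as response:
        body = json.loads(response.read().decode("utf-8"))
    return body["choices"][0]["message"]["content"]
```

Calling `chat("...")` requires a local server to be running; `build_chat_request` can be inspected on its own to check the payload before anything is sent.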

From script-level Burmese to agentic Burmese-language optimization for practical daily use

Burmese AI has moved from proving it can generate text to proving it can help people complete real tasks. Padauk focuses on that second step: planning, restructuring unclear requests, summarizing, drafting, and acting.

It is intentionally positioned as an assistant layer, not just a benchmark submission, with behavior that prefers useful action over decorative responses.

What Padauk is optimized for

Burmese intent

Burmese and English context handling, with a focus on Myanmar usage patterns, indirect requests, and task sequencing in daily workflows.

Tool-aware behavior

Designed to reason about when to answer directly and when to pass tasks to tools, APIs, or downstream agents for completion.
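One way an application might act on that decision is sketched below. It assumes the model's reply arrives in the OpenAI chat format, where a tool request appears as a `tool_calls` list on the assistant message and a direct answer appears as plain `content`. The `add_reminder` tool and its schema are hypothetical, and whether a given Padauk build emits `tool_calls` through your runtime is something to verify rather than assume.

```python
import json


def add_reminder(text):
    """Hypothetical local tool: pretend to store a reminder."""
    return {"status": "saved", "text": text}


# Registry mapping tool names to callables (names are illustrative).
TOOLS = {"add_reminder": add_reminder}

# OpenAI-style schema that would be sent in the request's "tools" field.
TOOL_SCHEMAS = [
    {
        "type": "function",
        "function": {
            "name": "add_reminder",
            "description": "Save a reminder for the user.",
            "parameters": {
                "type": "object",
                "properties": {"text": {"type": "string"}},
                "required": ["text"],
            },
        },
    }
]


def handle_message(message, tools=TOOLS):
    """Return ("answer", text) or ("tool_results", [...]) for one assistant message."""
    tool_calls = message.get("tool_calls") or []
    if not tool_calls:
        # No tool requested: treat the content as a direct answer.
        return ("answer", message.get("content") or "")

    results = []
    for call in tool_calls:
        name = call["function"]["name"]
        # In the OpenAI format, arguments arrive as a JSON-encoded string.
        arguments = json.loads(call["function"]["arguments"] or "{}")
        if name not in tools:
            results.append({"tool_call_id": call.get("id"), "error": f"unknown tool: {name}"})
            continue
        results.append({"tool_call_id": call.get("id"), "result": tools[name](**arguments)})
    return ("tool_results", results)
```

Unknown tool names are reported as errors instead of raising, so the results can be passed back to the model for another turn.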

Local-first operations

Built around realistic deployment: GGUF quantization, Ollama integration, and OpenAI-compatible local endpoints.

Two artifacts, one practical runtime path

The project ships practical runtime artifacts for application use, while preserving research lineage for reproducibility and continued adaptation.

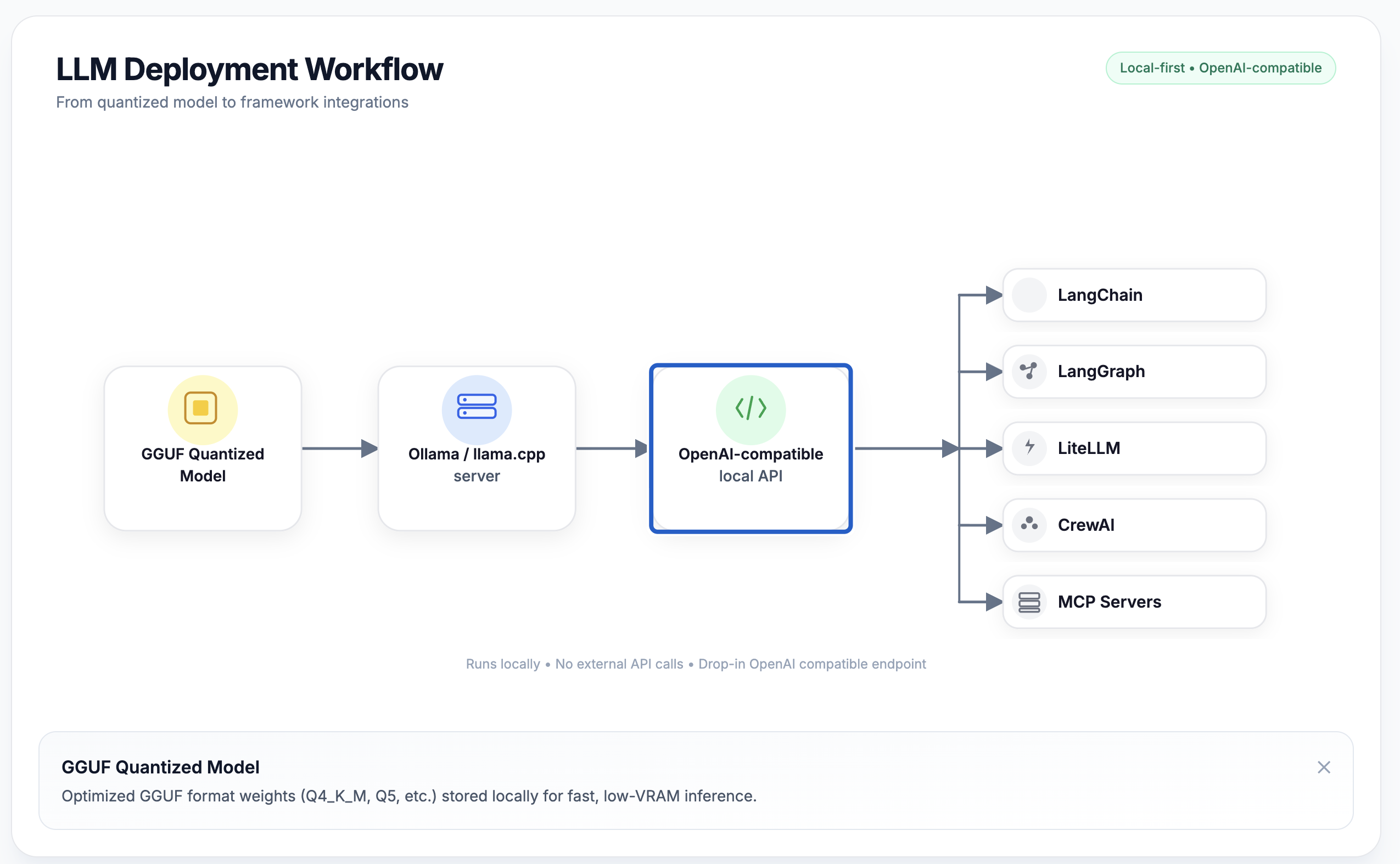

From artifact to agent workflow

In production, Padauk is typically used in a locally hosted chain: GGUF artifact → Ollama / local runtime → OpenAI-compatible API → app or MCP-connected assistant frameworks.

- Model path: Gemma 4 base + xLAM-style adaptation
- Serving: GGUF quantized model with Ollama-compatible local runtime
- Integration: OpenAI-compatible API for frameworks and automation tools
- Orchestration: LangChain / LangGraph / LiteLLM / CrewAI / MCP registries
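The serving step in this chain can be sketched as follows, assuming a GGUF file is already on disk. The helper renders an Ollama Modelfile that points at the file and returns the `ollama create` command to register it. The file name `./padauk-q4.gguf`, the model name `padauk`, the system prompt, and the temperature value are all hypothetical placeholders and are not taken from the project.

```python
def render_modelfile(gguf_path, system_prompt, temperature=0.7):
    """Return Ollama Modelfile text that points at a local GGUF file.

    Note: the system prompt must not itself contain triple double quotes.
    """
    return (
        f"FROM {gguf_path}\n"
        f"PARAMETER temperature {temperature}\n"
        f'SYSTEM """{system_prompt}"""\n'
    )


def create_command(model_name, modelfile_path):
    """Return the argv list for `ollama create`; run it with subprocess.run."""
    return ["ollama", "create", model_name, "-f", str(modelfile_path)]


if __name__ == "__main__":
    # Hypothetical paths and names, for illustration only.
    print(render_modelfile("./padauk-q4.gguf", "You are a Burmese-first assistant."))
    print(" ".join(create_command("padauk", "Modelfile")))
```

Once the model is registered, it is reachable through the same local OpenAI-compatible endpoint that the integration and orchestration layers listed above would call.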

Daily work, not only prompts

Companion writing

Draft and polish Burmese and English messages, turn rough notes into clean summaries, and format outputs into reusable checklists.

Automation helper

Decide between direct answers and structured next steps for small daily workflows and routine task chaining.
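On the application side, the two outcomes could be handled as in the sketch below. It assumes a hypothetical convention, not documented by the project, in which the assistant emits a JSON object such as `{"steps": [...]}` when it proposes next steps and plain text otherwise.

```python
import json
from dataclasses import dataclass, field


@dataclass
class Reply:
    kind: str  # "answer" or "steps"
    text: str = ""
    steps: list = field(default_factory=list)


def parse_reply(raw):
    """Interpret raw model output as either a direct answer or a step list.

    Assumed convention (hypothetical): a JSON object with a "steps" list
    means structured next steps; anything else is a direct answer.
    """
    try:
        data = json.loads(raw)
    except json.JSONDecodeError:
        return Reply(kind="answer", text=raw.strip())
    if isinstance(data, dict) and isinstance(data.get("steps"), list):
        return Reply(kind="steps", steps=[str(step) for step in data["steps"]])
    return Reply(kind="answer", text=raw.strip())


def to_checklist(reply):
    """Render a Reply as plain text, using a numbered checklist for step lists."""
    if reply.kind == "steps":
        return "\n".join(f"[ ] {i}. {step}" for i, step in enumerate(reply.steps, 1))
    return reply.text
```

Falling back to a plain answer whenever the output is not the expected JSON keeps the helper usable even if the model ignores the convention.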

Model ecosystems

Use alongside Burmese GPT and Burmese-Coder-4B as the assistant layer in a practical Myanmar AI stack.

Live Model Arena

Try the live assistant below. The interface is wired for local-first workflows and practical task-oriented usage.

Frequently Asked Questions

What is Padauk?

An open-source, Burmese-first practical AI assistant designed for daily work with agentic behavior and local deployment.

How is this different from earlier Burmese models?

Padauk emphasizes agentic behavior, practical task execution, and deployment portability over generic language fluency.

Where can I run Padauk?

Anywhere local inference is available. It supports GGUF, Ollama, and local OpenAI-compatible endpoints for self-hosted use.

Is Padauk free?

The page, model artifacts, and documented stacks are open for practical integration and experimentation.